For saying that I work in technology, I feel embarrassingly late to this party. I was recently transfixed by posts on Mastodon that showed images generated by Midjourney. I’d never heard of Midjourney. This started me off down a rabbit hole.

A few metres down the rabbit hole, I read about InvokeAI, an open-source alternative to Midjourney. A few metres more and I discovered that I would be able to run InvokeAI on my PC, which is equipped with an NVIDIA GeForce RTX 3060 graphics card.

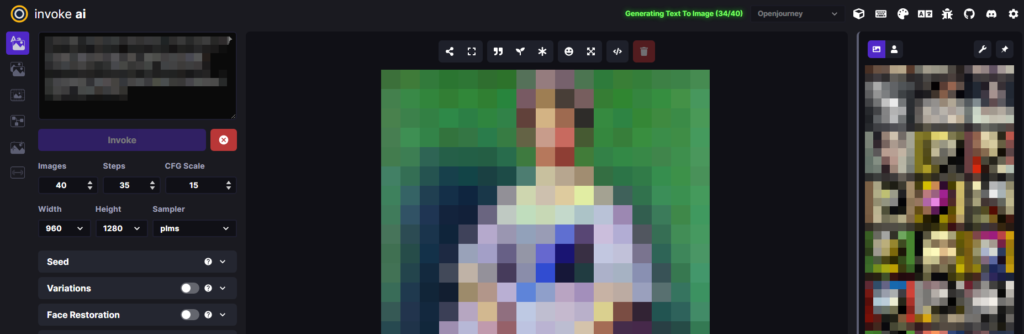

That’s how I found myself installing and running InvokeAI, despite still knowing virtually nothing about any facet of AI, let alone image generation. Faced with the InvokeAI web interface, the first question becomes “What do all these knobs and buttons do? What are “CFG Scale”, “Sampler” and “Steps”? What is the difference between the “Models”?

In case you find yourself in the same position, here’s a handy guide.

What is Midjourney?

Midjourney is an independent research lab that produces a proprietary artificial intelligence program under the same name that creates images from textual prompts. It is powered by AI and machine learning algorithms and uses text prompts and parameters to generate images. It can be used for both creative and practical applications, such as creating custom artwork and logos, or visualising data. Midjourney is constantly learning and improving, and can be accessed through the Discord chat app by messaging the Midjourney Bot.

What is Stable Diffusion?

Stable Diffusion is an image-generating model developed by Stability AI. It is powered by artificial intelligence and machine learning algorithms, and uses text prompts and parameters to generate images. It can be used for both creative and practical applications, such as creating custom artwork and logos, or visualising data. Stable Diffusion is open-source, meaning anyone can access and use the model without cost. The model has a relatively good understanding of contemporary artistic styles, making it a popular choice for creative applications.

Stable Diffusion is based on the concept of a CFG Scale (see below), which is a mathematical representation of the complexity of a system, which can be used to analyze the behavior of the system over time. The CFG Scale is used to measure the stability of a system, which is an important factor in understanding how the system evolves over time.

The Stable Diffusion algorithm uses a “sampler” (see below) to collect data from the system at different points in time. The algorithm then uses a model to analyse the data collected from the sampler.

What’s InvokeAI then?

InvokeAI is an implementation of Stable Diffusion. It is powered by artificial intelligence and machine learning algorithms, and uses text prompts and parameters to generate images. It is optimised for efficiency, and can generate images with relatively low VRAM requirements. It supports the use of custom models.

The InvokeAI web interface offers lots of parameters. The rest of this article is dedicated to explaining those parameters.

CFG Scale

The CFG Scale, or Classifier-Free Guidance Scale, is a parameter in the Stable Diffusion image generation model. It controls how much the image generation process is guided by the initial input, and how much it is random. (It determines the amount of noise in the generated image.) A high CFG Scale value produces output more closely resembling the text prompt.

A low CFG Scale value in Stable Diffusion can result in the output image having a lower fidelity and quality. It can for example lead to the model generating extra arms and legs! But it also increases the diversity and creativity of the result.

Increasing the CFG Scale value can result in higher quality, as well as a more dynamic colour range. It can also lead to more detailed images with a higher resolution. That said, high CFG values can lead to unrealistic-looking images.

The best CFG Scale value range for Stable Diffusion is generally between 7 and 11. Higher values of 50 to 100 are recommended for good outpainting results.

The Steps parameter

The Steps parameter of Stable Diffusion defines the number of inference steps used in the model. It dictates the amount of detail that is produced when generating images from text descriptions.

Generally, increasing the number of steps will produce higher quality images, but this will come at the expense of slower inference. The optimal number of steps will depend on the dataset and task at hand. In most cases, images will converge on 30-50 steps and will not change significantly with higher steps.

The Sampler parameter

InvokeAI provides several different samplers that can be used to generate samples from a given data distribution. The k_euler sampler uses the Euler-Maruyama algorithm to generate samples from a given distribution. The k_dmp_2 sampler uses a second-order differential equation to generate samples. Each of the samplers has its own advantages and disadvantages, and experimentation will be rewarded.

The sampler is used to select regions of an image and generate a diffusion map that conditions the output of the model. The diffusion map is then used to generate a detailed image conditioned on text descriptions. The sampler also allows for a more efficient and stable training process, as it reduces the number of steps needed to generate a result. Additionally, the sampler can upscale samples from the diffusion model, allowing for better results when generating smaller images.

Prompts

Prompts in Stable Diffusion are phrases or words used to generate AI-generated images. They are used to provide the AI model with guidance on what type of image to create. Prompts can range from abstract concepts to specific objects or scenes. They can be weighted to emphasise certain keywords.

Example prompts include “a picture of a black cat on a kitchen top”, “Paintings of Landscapes”, “Style cue: Steampunk / Clockpunk”, “a beautiful sunset over a beach” or “a futuristic cityscape.” Using very specific prompts, helps the AI to generate more accurate and detailed images.

Different models*

Confusingly, you can use different models with InvokeIA. The most commonly used is the Stable Diffusion Model. InvokeAI can use a range of other models for image processing and manipulation tasks. InvokeAI also provides tools for creating custom models, allowing users to create their own models that can be used in combination with the existing models.

InvokeAI supports a variety of models, from classic Machine Learning algorithms such as Random Forest and Logistic Regression to deep learning models such as Convolutional Neural Networks (CNNs) and Recurrent Neural Networks (RNNs). The main differences between these models are the type of data that they process and the types of result they produce. For example, Random Forest and Logistic Regression are good at predicting classifications, while CNNs and RNNs are better at recognizing patterns in images and text. Additionally, CNNs and RNNs can be used for more complex tasks such as image recognition, text generation, and language translation.

Sneaky disclaimer

So here’s my disclaimer. I’ve pulled a slightly sneaky trick with this blog post. I’ve recruited AI to explain AI. That seemed logical. I used AI text generation services YouChat and Perplexity to explain Stable Diffusion, using prompts like “Explain the CFG Scale in Stable Diffusion.”

That feels like cheating. But I did at least sanity-check the results and edit them before posting here. Some of the answers were in fact wrong. And it was no quicker writing the blog post this way. But roughly 80% of the text in this blog post was AI-generated. How does that make you feel? Tell me in the comments!

*The AI-based text-generators really struggled to explain the difference between the various models that work with InvokeAI. My suggestion is to install some and experiment!